AI is no longer an experiment at the edges of automotive marketing. It is embedded in day‑to‑day creative workflows, from image clean‑up and background swaps to fully generated video, copy, and dynamic offers.

The real risk is not only how content is made, but how it is perceived, trusted, and interpreted by consumers. Poorly governed or overly synthetic outputs can misrepresent vehicles and offers, undermine credibility, and erode brand equity. That has prompted Ansira to step back and ask how brands can get ahead of these risks, rather than react to them, and ensure strong guidance is in place as adoption accelerates.

At Ansira, our stance is simple: Act as stewards of the brand. Every execution, AI‑assisted or not, should meet the same standards of accuracy, realism, and trust that have always underpinned effective automotive advertising.

Why responsible AI in automotive creative matters now

Brands across all verticals are rapidly testing AI for imagery, video, and copy; automotive is no exception. At the same time, consumer expectations around transparency and trust are rising.

A 2025/2026 NielsenIQ study found that when AI involvement in an ad is disclosed or becomes obvious, ad credibility and purchase intent can drop by 15–20%. Consumers in the study often described AI‑generated ads as “annoying,” “boring,” or “confusing,” and they were quick to spot them. That negative halo can extend from creative execution to the brand itself.

However, when AI is used “invisibly” to enhance image quality, clean up backgrounds, or personalize a deal, consumers generally don’t mind. The issue is not AI in principle; it’s how visible, synthetic, or misleading the output feels.

Meanwhile, generative tools make it trivially easy to scale:

- Huge volumes of visuals and video where vehicles, people, and even basic physics are subtly wrong.

- Synthetic “dealerships,” staff, and customers that never existed, and may not reflect your real network or values.

- “Cheap‑feeling” creative that chips away at a brand’s premium or safety‑led positioning over time.

AI isn’t breaking creative guidelines as much as stress‑testing them, highlighting where they hold firm and where they fall silent.

How existing automotive guidelines hold up against generative AI

Most original equipment manufacturers (OEMs) and dealer programs already have robust rulebooks for imagery, pricing, disclosures, and tone. When we map typical clauses against common AI use cases, we see three patterns:

- Explicit coverage: The guideline already clearly applies to AI (e.g., “no distortions or alterations,” “no misleading claims”).

- Implicit coverage: The spirit of the rule applies, but the language doesn’t mention modern tools or synthetic generation.

- Silence: There is little or no guidance on synthetic people, locations, or obviously AI‑styled executions.

Below are key pressure points where AI is stretching today’s guidelines in automotive creative.

Pressure point #1: Image & video quality and distortions

What AI does

AI makes it easy to output high volumes of visuals where vehicles, people, and environments are slightly “off” — warped panels, impossible lighting, distorted badges, or physics that don’t make sense. At scale, that normalizes inaccurate vehicles, unsafe scenarios, and low‑quality execution.

Why it creates risk

- Conflicts with existing standards that require high‑quality, undistorted vehicle photography and video

- Can misrepresent models, trims, or equipment, especially when AI recolors vehicles or adds/removes features without clear disclosure

- Undermines perceived quality, particularly for premium or technology‑led brands

Guideline considerations

- Treat any “distortions or alterations” as out of bounds when they clearly violate brand standards, regardless of the tool used.

- Make it explicit that all editing and image‑generation methods (present and future) are covered by the same standards, not just specific software brands (e.g., Photoshop).

- For video, spell out that enhancements cannot alter the apparent capabilities or behavior of vehicles, people, or locations in ways that could mislead or glamorize risk.

Pressure point #2: Third‑party & location representation

What AI does

AI can fabricate dealerships, interiors, neighborhoods, or cityscapes that look plausible but don’t exist. It can also alter signage and uniforms in ways that imply relationships or locations that are not real.

Why it creates risk

- Misrepresents dealership identity and facilities when synthetic exteriors/interiors are presented as real stores

- Implies unauthorized partnerships, affiliations, or co‑branding (e.g., added logos, joint signage) that haven’t been approved

- Creates potential regulatory and franchise‑relationship issues when store identity or geography is misrepresented

Guideline considerations

- Extend representation standards beyond vehicles to explicitly cover people and locations.

- Prohibit visuals that imply unauthorized partnerships or affiliations via signage, uniforms, or logos, no matter how they were created.

- Add clear expectations for synthetic, illustrated, or stylized people and environments, including how they may (or may not) stand in for real locations.

Pressure point #3: Brand integrity, disparagement, and tone

What AI does

Generative tools can crank out endless “cheap‑feeling” creative: meme‑driven layouts, plastic or uncanny humans, over‑bright color palettes, and shouty copy that avoids specific banned phrases but still erodes perceived quality.

Why it creates risk

- Signals a “we don’t care enough” mindset, which is especially damaging for premium or safety‑led marques

- Breaks emotional connection as viewers focus on the weirdness of the people or the scene instead of the story

- Creates a disconnect between the brand’s stated values (e.g., safety, quality) and what the creative glamorizes or trivializes on screen

Guideline considerations

- Evolve “no disparagement” clauses from purely verbal to verbal + visual tone: execution styles should not mock, cheapen, or trivialize the brand.

- Embed a “fit for brand” review lens into pre‑approval: Does this AI‑enabled execution feel consistent with how the OEM wants to show safety, quality, and human experience, not just with what is factually true?

The creative risk line: Safety, realism, and representation

AI raises difficult questions about where to draw the line between acceptable stylization and material misrepresentation.

AI‑fabricated driving and safety scenarios

AI can produce spectacular, cinematic driving sequences that ignore real‑world physics and conditions: perfect traction in unsafe weather, unrealistic maneuvers, or empty “video‑game” roads.

This creates a gap between the brand’s stated safety values and what it visually glamorizes, which regulators and consumers increasingly view as irresponsible, especially if there is any implied performance or safety claim.

Overly synthetic people and emotions

AI‑generated people often look uncanny, with odd movements and inconsistent proportions. Reactions and crowds may loop in ways that feel obviously fake on repeat viewings, and training data can skew toward narrow demographics by default.

The result: creative that signals cheapness, breaks emotional connection, and may introduce representation issues that sit outside traditional “vehicle‑centric” rules but still affect inclusion and brand safety.

Questions to assess your brand guidelines on generative AI in automotive

Based on our compliance work across OEM programs, we recommend stress‑testing your guidelines with questions in seven areas:

- Image and video quality/alterations

  - Do guidelines require vehicle imagery to be high‑quality and free of distortions or unnecessary labels, regardless of how the image was created?

  - Is the wording broad enough (“any distortions or alterations”) to cover all editing and generation methods, not just named tools?

- AI disclosure & transparency

  - Is there guidance on when and how AI involvement should be disclosed to consumers?

  - Is it clear who owns that disclosure (the OEM, dealers, or agencies) and how jurisdictional differences are handled?

- “Illustration only” and stylized depictions

  - When exact models/trims/colors are not shown (e.g., concept art, alternate color, higher trim), do guidelines require a clear “for illustration only” type of disclosure?

- Realism and safety depiction

  - For program‑funded dealer advertising, are dealer‑created spots required to be based on real vehicles in live‑action driving?

  - Do rules ban edits or effects that materially alter the apparent behavior of vehicles, people, or locations in ways that could mislead or glamorize unsafe scenarios?

- Monitoring, pre‑approval, and pattern detection

  - Are AI‑assisted assets treated under the same umbrella as “altered, distorted, or inaccurate art,” rather than a special exception?

  - Do you have examples and pattern‑based reviews in place to catch emerging AI trends before they scale across the network?

- Stylized, cartoon, and illustrated imagery

  - Is there explicit guidance on when cartoon or illustrated vehicles and people are acceptable and when exaggerated forms cross the line into misleading or brand‑eroding territory?

- Governance across people, locations, and tone

  - Do guidelines extend beyond vehicles to cover realistic, respectful portrayals of staff, customers, and facilities?

  - Is there a clear expectation that tone, style, and visuals must support the brand’s positioning, not mock or cheapen it?

From rules to practice: Ansira’s compliance playbook

The gap between policy and day‑to‑day execution is where risk lives. Ansira’s fund and compliance programs are designed to bake brand standards into every model, including AI‑assisted creative, without putting brand or regulatory compliance at risk.

Key elements include:

- Co‑op/MDF programs: Pre‑approval and proof‑of‑performance tied to eligible activities and brand‑safe creative, including AI‑assisted executions

- Compliance programs: Ongoing reviews of in‑market creative, with funds tied to guideline adherence around pricing, imagery, tone, and disclosures

- Spend review: Audits of how dollars are used over time, withholding or charging back funds when spend or creative falls outside the rules

- Commitment and experience programs: Incentives for partners who deliver the OEM’s desired ownership experience, not just those who run ads

- Rebates and rewards: Financial reinforcement for compliant enrollment, execution, and proof, aligning behaviors across the ecosystem

Monitoring is the engine of these programs. Our teams can conduct random, proactive sweeps of dealer and partner advertising across channels, with low additional lift for dealers. Ansira handles all asset harvesting, review, and feedback. Findings are always evaluated against the OEM’s own rulebook to keep enforcement consistent and defensible.

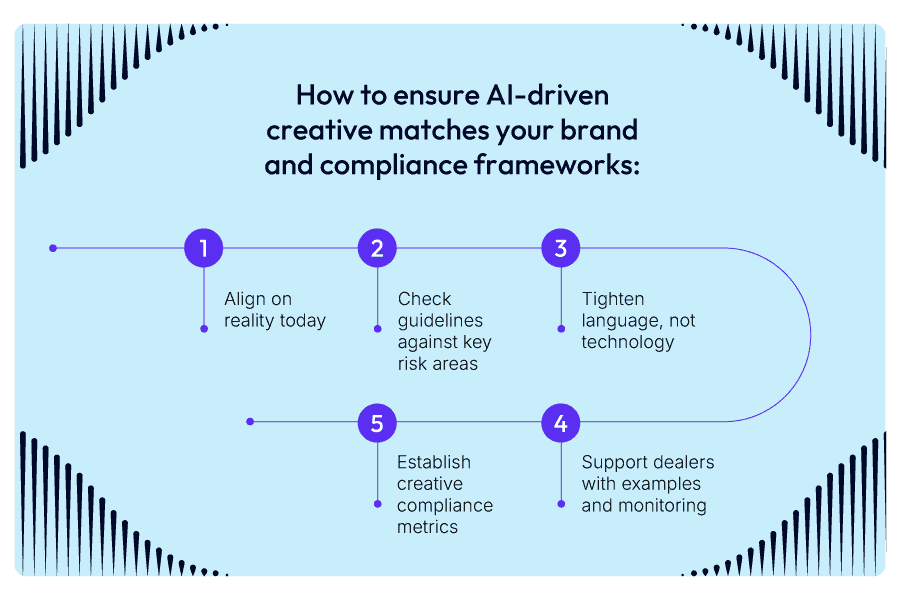

Recommended next steps for OEMs and dealer networks

To align AI‑driven creative with your brand and compliance framework, we recommend five practical steps:

1. Align on reality today

Audit your last 90 days of pre‑approvals and claims to identify where AI‑generated or AI‑altered creative is already appearing in your network, and whether existing processes caught it.

2. Check guidelines against key risk areas

Map your current brand guidelines against the risk areas in this framework (image quality, safety depiction, representation, tone, disclosure, stylization, and monitoring) to see where you have explicit coverage, implicit coverage, or silence.

3. Tighten language, not technology

Update guideline language to be method‑agnostic, covering “any distortions, alterations, or synthetic generation” rather than naming specific tools, so your standards stay durable as technology evolves.

4. Support dealers with examples and monitoring

Build a “good vs. out‑of‑bounds” reference library of AI‑assisted creative examples and integrate it into dealer training and pre‑approval workflows. Expand monitoring to catch emerging patterns before they scale.

5. Establish creative compliance metrics

Define and review AI‑related creative compliance metrics quarterly so you can spot trends early and adjust guidance, enablement, and enforcement in a coordinated way.
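For teams that want to track steps 2 and 5 in a lightweight way, the gap mapping and a quarterly metric can be sketched in a few lines of Python. This is an illustrative sketch only, not a description of Ansira tooling: the `Coverage` labels mirror the explicit/implicit/silence patterns described earlier, while `ReviewedAsset`, `coverage_gaps`, `ai_compliance_rate`, and the sample data are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Coverage(Enum):
    """How well current guideline language covers a risk area."""
    EXPLICIT = "explicit"
    IMPLICIT = "implicit"
    SILENT = "silent"


# The seven risk areas named in step 2.
RISK_AREAS = [
    "image quality",
    "safety depiction",
    "representation",
    "tone",
    "disclosure",
    "stylization",
    "monitoring",
]


@dataclass
class ReviewedAsset:
    """One reviewed piece of creative (hypothetical record shape)."""
    asset_id: str
    ai_assisted: bool
    compliant: bool


def coverage_gaps(coverage_map):
    """Return (area, status) pairs lacking explicit coverage, silent areas first.

    Areas missing from the map are assumed to be silent.
    """
    gaps = []
    for area in RISK_AREAS:
        status = coverage_map.get(area, Coverage.SILENT)
        if status is not Coverage.EXPLICIT:
            gaps.append((area, status))
    # Stable sort: silent areas move to the front, original order otherwise kept.
    gaps.sort(key=lambda gap: gap[1] is not Coverage.SILENT)
    return gaps


def ai_compliance_rate(assets):
    """Share of AI-assisted assets that passed review, or None if there were none."""
    ai_assets = [a for a in assets if a.ai_assisted]
    if not ai_assets:
        return None
    return sum(a.compliant for a in ai_assets) / len(ai_assets)


if __name__ == "__main__":
    # Made-up sample inputs for illustration.
    sample_map = {
        "image quality": Coverage.EXPLICIT,
        "safety depiction": Coverage.IMPLICIT,
        "disclosure": Coverage.SILENT,
    }
    sample_assets = [
        ReviewedAsset("a1", ai_assisted=True, compliant=True),
        ReviewedAsset("a2", ai_assisted=True, compliant=False),
        ReviewedAsset("a3", ai_assisted=False, compliant=True),
    ]
    for area, status in coverage_gaps(sample_map):
        print(f"{area}: {status.value}")
    print("AI-assisted compliance rate:", ai_compliance_rate(sample_assets))
```

Listing silent areas first reflects one possible prioritization: gaps with no guidance at all are usually the first to close, with implicit coverage tightened afterward. The right metric definitions will depend on each OEM's own rulebook.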

Our POV: Stewardship in an AI‑enabled future

AI will continue to accelerate speed and scale in automotive creative. The brands that win will not be those that chase every new capability, but those that pair innovation with stewardship, protecting accuracy, realism, and trust even as tools evolve.

Ansira’s teams are here to help OEMs and their networks navigate this shift, clarifying how AI‑generated and AI‑altered creative fits within existing guidelines, identifying gaps, and designing fund and compliance programs that keep your brand safe while your creative stays competitive.

Responsible AI in creative is not a new rulebook. It is a sharper lens on the standards you already have and a renewed commitment to living them, execution by execution.

Get in touch with one of our experts to learn more about Ansira’s automotive consulting services.